Cyber drinking experience with hand gestures

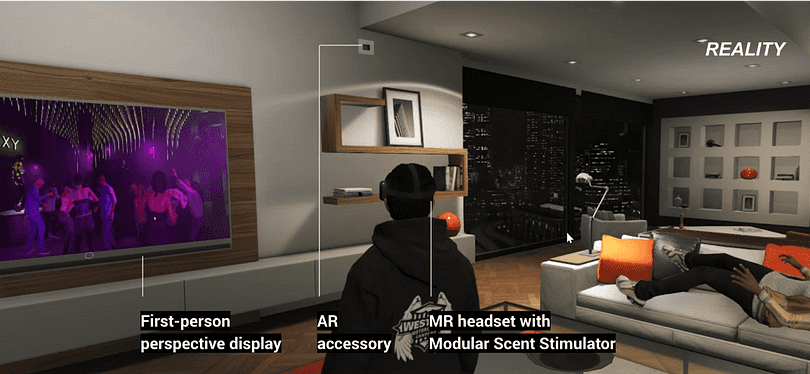

A conceptual experience design for the future of in-home clubbing. We used the post-human-centered design framework to explore the opportunities created by new technologies and chose mixed reality to tell the story. I then explored the hand interactions users would expect when wearing a headset in the imagined future scenario.

#1 place in Master Challenge 2020 ID X Verizon

Part 1: Concept Design

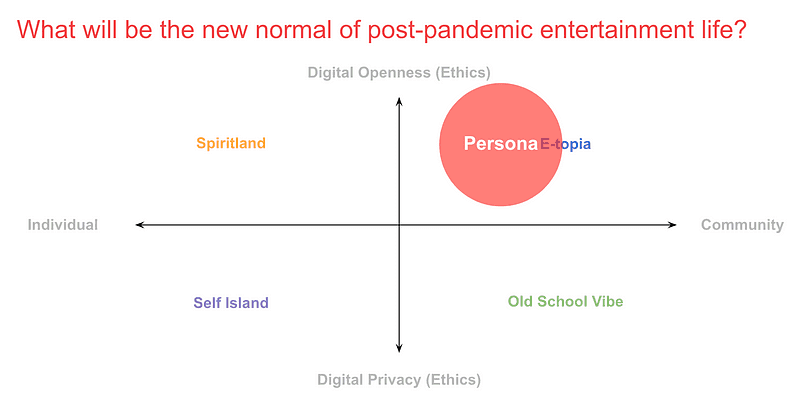

People will eventually adapt to the new normal brought about by COVID-19. Work-from-home policies are reshaping people’s habits: they are starting to embrace new technologies in the digital era and value community more than ever. Society has been seeking a way out of the economic shutdown that also restores the entertainment side of people’s lives. What can we do for everyday entertainment if we have to live with the virus?

Part 2: Interaction Prototype

Given the proposed scenario, how would people interact with the system? This involves object manipulation in mixed reality. The question is how to optimize the interaction flow so that these operations don't disrupt the overall clubbing experience.

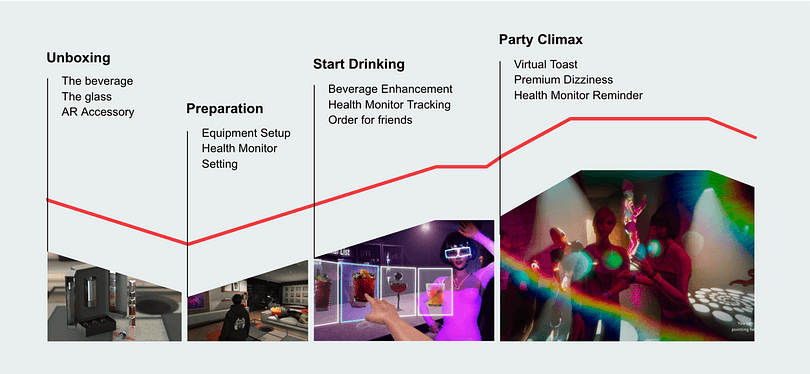

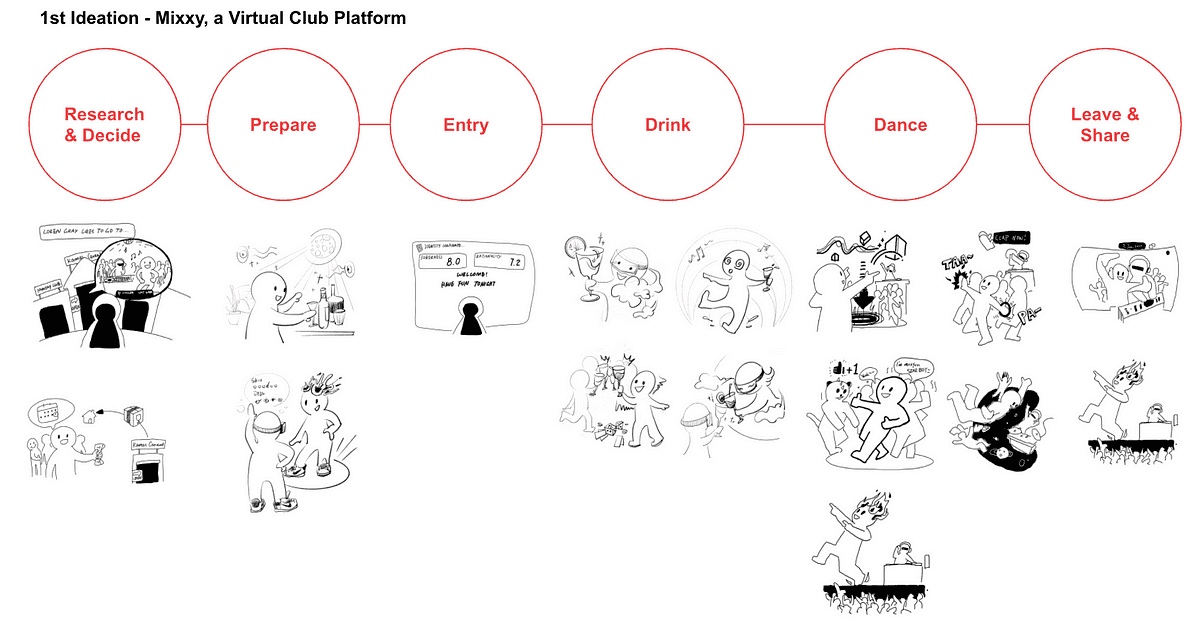

Concept Design: User Journey in Mixxy

Entire user journey from unboxing to party climax when using Mixxy

Unboxing: users receive the package and set up their home environment with the sensors we provide to capture their movement and feelings.

Method & Process

Post-Human-Centered Design Method

A post-human-centered design approach recognizes that people and their technology are an integral part of the constantly evolving socio-technical system we live and work with and through. We need to incorporate new methods we can use to:

- expand beyond the individualized focus of HCD

- emphasize emergent behavior of systems

- explore multiple future outcomes and externalities

- enable live adaptation through design approaches

The challenge of human-centered design:

A focus on the individual "user," their "problems," and "needs" is necessary but no longer sufficient for the increasingly complex, interdependent socio-technological environment we are working and living within. We need to evolve our approach to accommodate our partnership with software-driven products and their use as an extension of ourselves—products we act through, not just with.

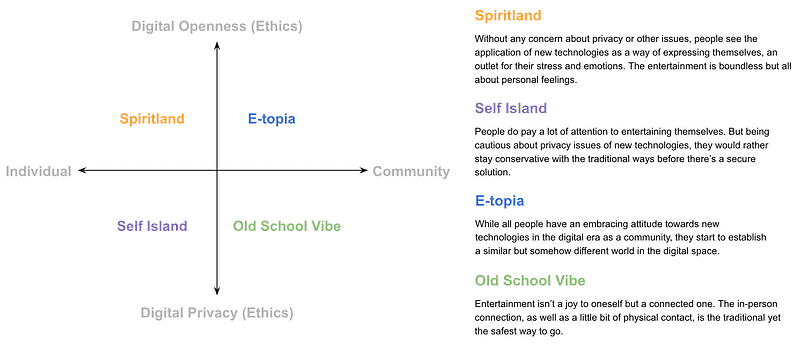

Step 1: Understanding Context

Scenario planning based on social impact & Desk research: emerging technologies & Current solutions for an immersive social experience

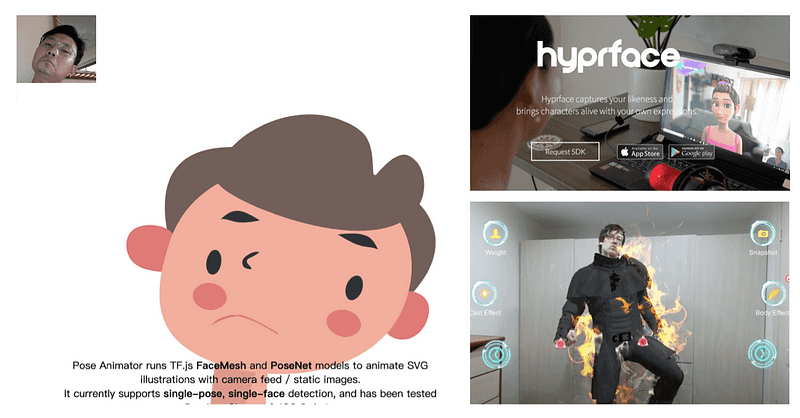

We began with a 2×2 scenario planning approach to explore how social and digital preferences might shape future user groups. Our desk research uncovered rising enthusiasm for virtual events, driven by cheaper VR setups, AR headsets, and robust examples of online concerts and VR-based gatherings. We then examined current immersive social platforms (e.g., Facebook Horizon, Altspace, Mozilla Hubs) and concluded that users’ ability to easily translate real-world movements into digital form is key to a fully expressive virtual experience. Ultimately, single-camera technology (like a phone camera) emerged as a promising, accessible tool for enabling these interactions.

Step 2: Generative Research

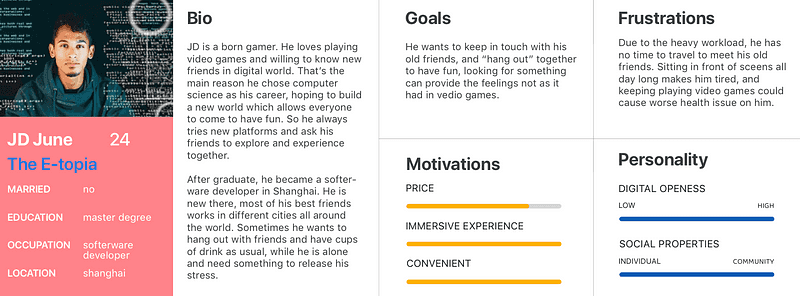

User Interview & Persona

We narrowed our target to “E-topia” users: those open to novel digital experiences and eager for large-scale social engagement. We interviewed six individuals from varied backgrounds (DJs, clubbers) to explore their expectations for virtual entertainment. The key insights were that virtual events should be treated as a distinct experience rather than a replacement, and that they should leverage heightened sensory elements (smell, touch), uphold essential club “props,” and emphasize social connectivity. These findings shaped our persona (JD June, 24), who illustrates someone seeking immersive yet convenient ways to socialize remotely in a post-COVID world.

Step 3: Synthesize Ideas

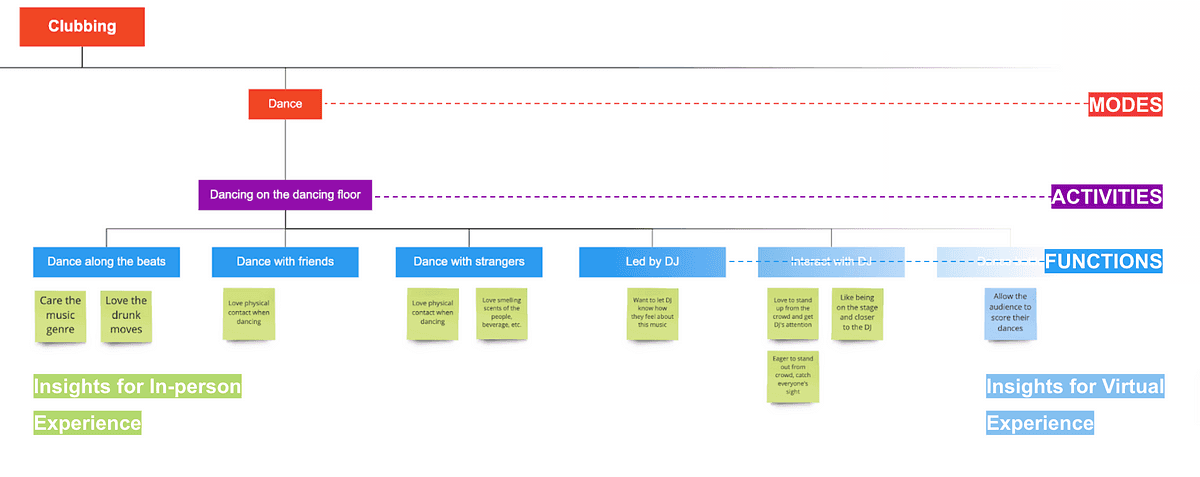

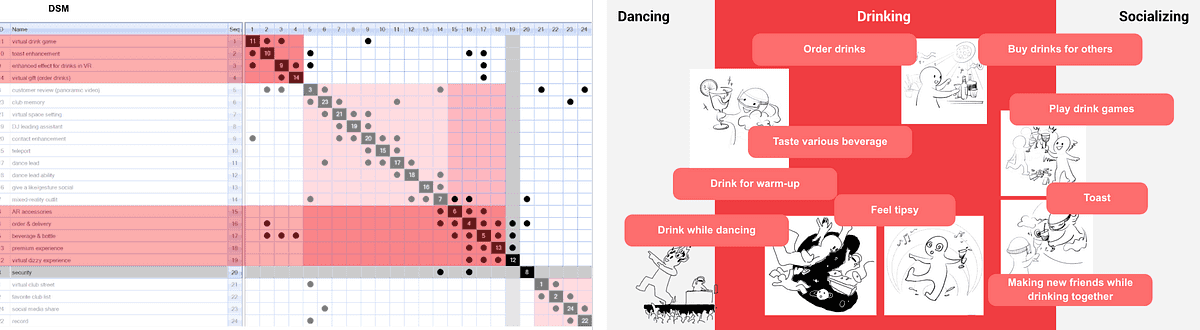

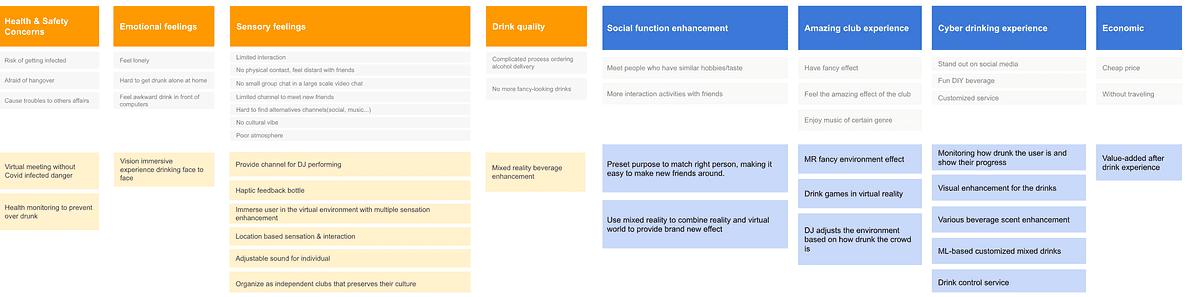

Structured Planning & Storyboard & DSM to narrow down the idea & Value Proposition Canvas

We used a 5E framework to examine all aspects of in-person clubbing and identify opportunities to enhance or add features for a more immersive virtual experience. A storyboard helped visualize how our platform, “Mixxy,” might operate. Next, we employed a Dependency Structure Matrix (DSM) to prioritize three key functions (dancing, drinking, and socializing) while retaining as many relevant features as possible. Finally, a Value Proposition Canvas allowed us to delve into users’ emotional and sensory needs and pinpoint the critical design touchpoints to refine our concept.

Interaction Prototype: Hand interaction

Motivation

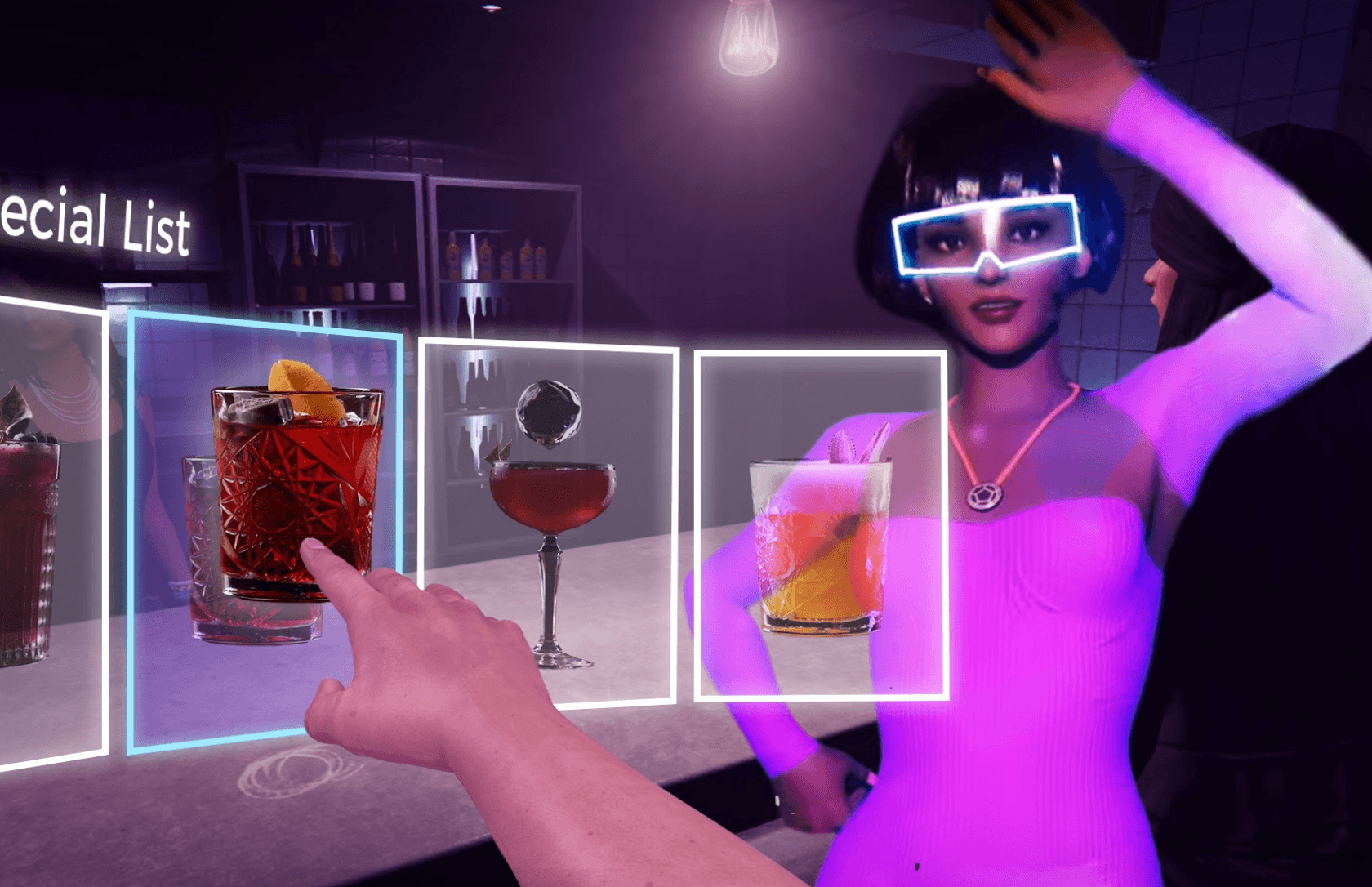

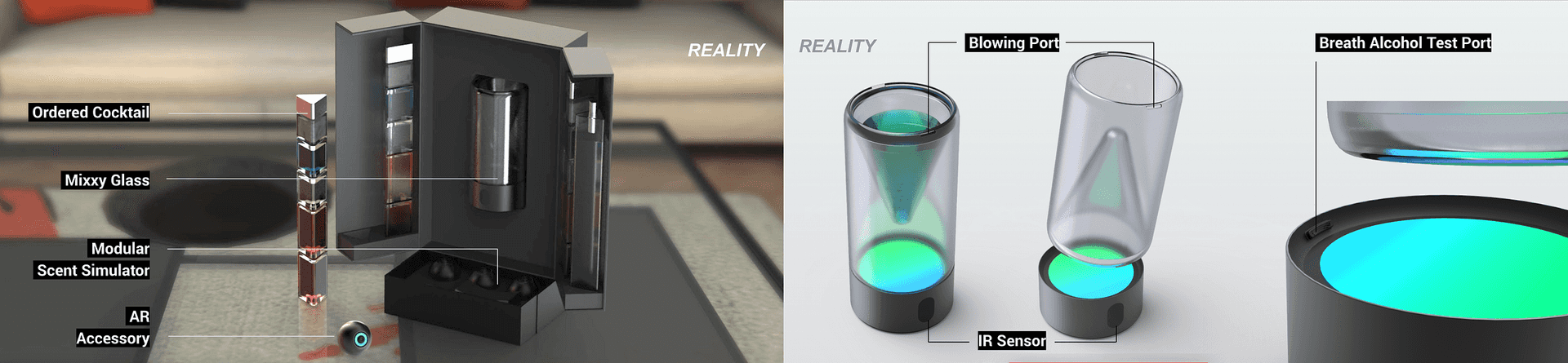

Previously, my team introduced Mixxy, a future clubbing service that uses sensors, simulators, and visual enhancements to recreate the thrill of dancing, drinking, and socializing in a mixed-reality environment. Building on that concept, I now want to dive deeper into one core aspect of the club experience: how users interact with digital objects to order drinks. This naturally extends our original idea—focusing on the drinking experience as outlined by the DSM—by examining how these “normal” club interactions (like browsing a bar menu or chatting with a bartender) might unfold in a mixed-reality setting.

Understanding Trends

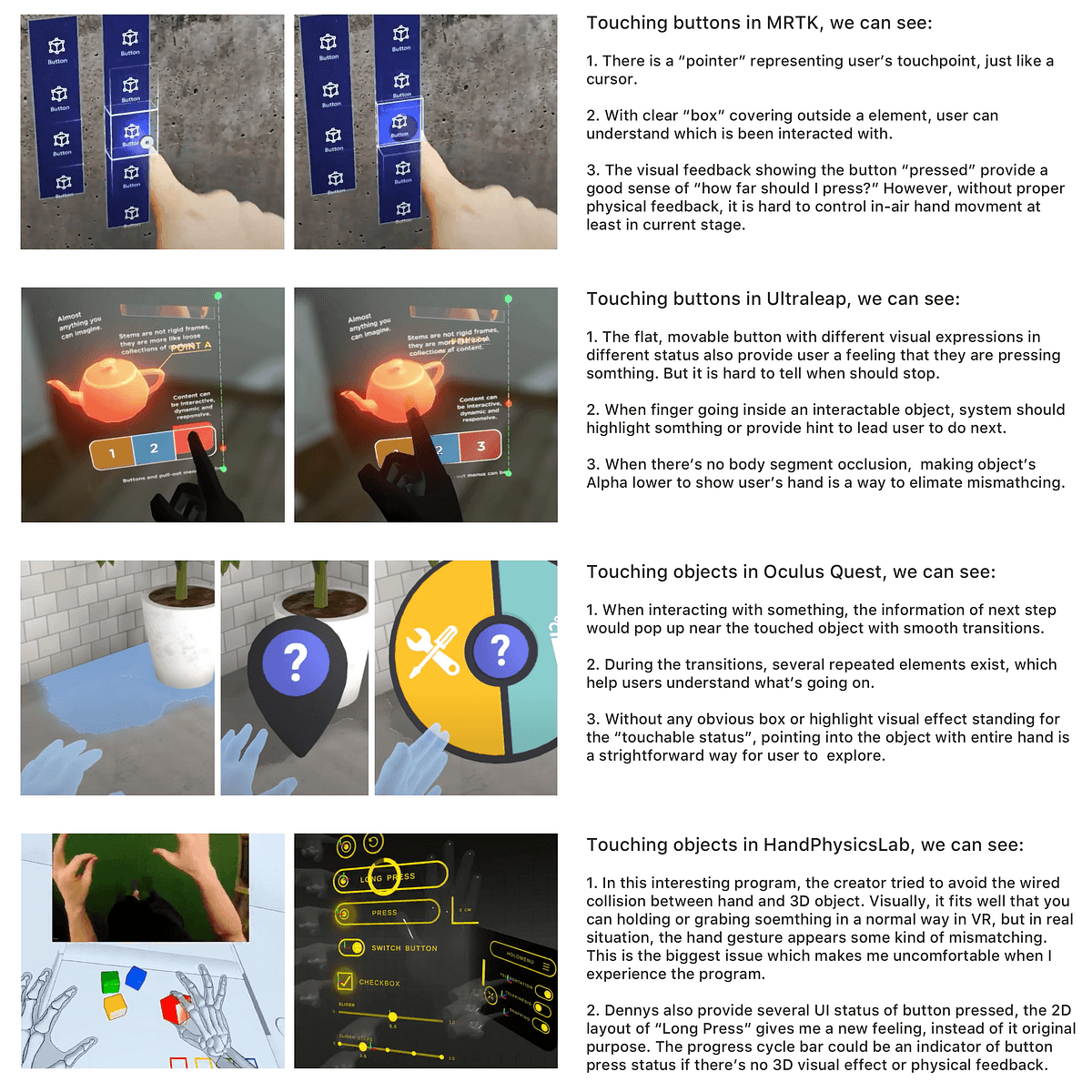

Hand tracking systems, like those introduced by Oculus Quest in 2020, have triggered a surge of VR applications leveraging in-air gestures for richer social engagement and natural interactions. Research from the 2020 IEEE VR Conference suggests that novice mixed-reality users gravitate toward simple, intuitive gestures (e.g., pressing a button with the index finger) and benefit from clear visual affordances like bounding boxes in HoloLens 2. Meanwhile, usability studies reveal that missing tactile feedback, limited gesture sets, and visual obstruction between objects remain significant obstacles. Proposed improvements include integrating more feedback mechanisms, simplifying tasks, and combining gestures to create a smoother, more immersive user experience.

Reflection

Designing for mixed reality without a HoloLens can be challenging, yet technologies like ManoMotion open the door to real-time hand tracking through an iPhone. My coding background in Unity and C# has helped me build more meaningful interaction designs that go beyond conventional 2D screen interfaces. In traditional UX for smartphones, I’d explore how touch gestures could benefit users; however, translating those gestures directly into 3D quickly reveals new complexities, such as missing tactile feedback or the need for clear visual affordances (e.g., bounding boxes). I’ve realized that simply mimicking human movements in a 3D space, or floating 2D screens within it, can feel awkward. Instead, I’m experimenting with a “Single Layer” concept, where gestures are intuitive, accessible, and visually guided, to make users’ lives easier in mixed reality. Going forward, I plan to focus on stronger visual cues and transitions that compensate for the missing tactile feedback, ensuring a more natural and immersive experience in hand-tracked MR environments.